Foreword

Some projects call for a full-text search engine. Drawing on my own project experience, this article introduces several commonly used open source options, compares their similarities and differences, and weighs their respective advantages and disadvantages.

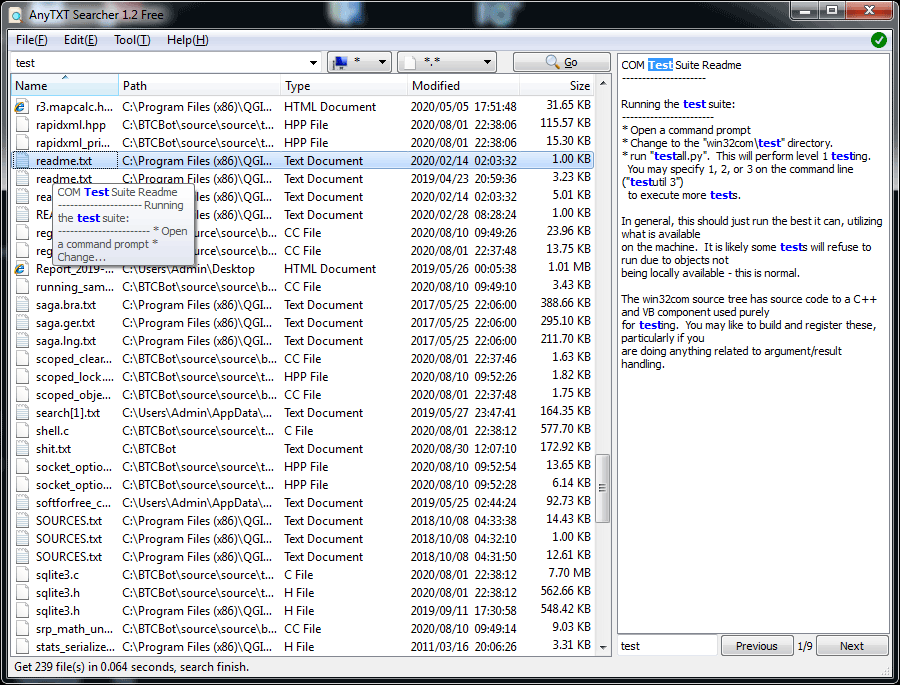


What is a full-text search engine?

Wikipedia's definition:

In text retrieval, full-text search refers to techniques for searching a single computer-stored document or a collection in a full-text database. Full-text search is distinguished from searches based on metadata or on parts of the original texts represented in databases (such as titles, abstracts, selected sections, or bibliographical references). In a full-text search, a search engine examines all of the words in every stored document as it tries to match search criteria (for example, text specified by a user). Full-text-searching techniques became common in online bibliographic databases in the 1990s. Many websites and application programs (such as word processing software) provide full-text-search capabilities. Some web search engines, such as google.com, employ full-text-search techniques, while others index only a portion of the web pages examined by their indexing systems.

The definition gives a rough idea of what full-text retrieval is. For a more detailed explanation, let's start with the kinds of data we meet in everyday life.

Broadly, there are two types of data:

Structured data: data with a fixed format or limited length, such as database records and metadata.

Unstructured data: also called full-text data, this is data of indefinite length or without a fixed format, such as emails and Word documents.

Some classifications also add a third type, semi-structured data, such as XML and HTML. When needed, it can be processed as structured data, or its plain text can be extracted and processed as unstructured data.
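As a minimal sketch of that second option, the snippet below uses Python's standard-library `html.parser` to strip the markup from an HTML page and keep only its text, ready to be indexed as unstructured data. The `TextExtractor` class and `html_to_text` helper are hypothetical names, and skipping `<script>`/`<style>` content is an assumption about what counts as text; real extractors treat whitespace and block boundaries more carefully.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects the text nodes of an HTML document, skipping <script> and <style> content."""

    SKIP_TAGS = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self.parts: list[str] = []
        self._skip_depth = 0  # > 0 while inside a tag whose content is not prose

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.parts.append(text)


def html_to_text(html: str) -> str:
    """Flatten semi-structured HTML into plain text suitable for full-text indexing."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.parts)


page = ("<html><head><style>p {color: red}</style></head>"
        "<body><h1>NBA Finals</h1><p>James scores <b>40</b> points</p></body></html>")
print(html_to_text(page))  # NBA Finals James scores 40 points
```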

Following this classification, search also falls into two types: structured data search and unstructured data search.

Structured data is usually stored and searched in the tables of a relational database (MySQL, Oracle, etc.), and indexes can be built to speed up those searches.

There are two main methods for searching unstructured data, that is, full-text data:

Sequential scanning: as the name suggests, this method finds specific keywords by scanning the text in order, from start to finish.

For example, given a newspaper, find every place where the text "RNG" appears. You have no choice but to scan the newspaper from beginning to end, marking each section in which the keyword appears and where it appears.

This method is undoubtedly the most time-consuming and the least efficient. If the print is small and there are many sections, or even several newspapers, your eyes will be worn out by the time you finish.
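Here is a minimal sketch of sequential scanning, assuming each newspaper section is just a Python string (the `sequential_scan` helper and the sample text are hypothetical). Every query re-reads every character of every section, which is exactly why this approach slows down as the number of sections and papers grows.

```python
def sequential_scan(sections: dict[str, str], keyword: str) -> list[tuple[str, int]]:
    """Scan every section from start to end, recording (section, offset) for each hit."""
    hits = []
    for name, text in sections.items():
        start = text.find(keyword)
        while start != -1:
            hits.append((name, start))
            start = text.find(keyword, start + 1)  # keep scanning after this hit
    return hits


newspaper = {
    "sports": "RNG won again. Fans of RNG celebrated.",
    "finance": "Markets were flat.",
}
print(sequential_scan(newspaper, "RNG"))  # [('sports', 0), ('sports', 23)]
```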

Full-text search: sequentially scanning unstructured data is slow, so can we optimize it? What if we could give our unstructured data some kind of structure?

Part of the information in the unstructured data is extracted and reorganized so that it has a certain structure; searching this structured data is then relatively fast.

This is the basic idea of full-text retrieval. The information that is extracted from unstructured data and reorganized is called an index.

Back to the newspaper: suppose we are NBA fans who want to follow news about the NBA Finals. How can we quickly find the newspapers and sections that carry NBA news?

The full-text search approach extracts keywords from every section of every newspaper, such as "Harden", "NBA", "James", "Lakers", and so on.

These keywords are then indexed, and the index maps each keyword to the newspapers and sections in which it appears. Note how this differs from a directory search engine, which organizes content into a category hierarchy rather than indexing the words themselves.
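The newspaper example can be sketched as a keyword-to-location map built once up front. This is only an illustration under simple assumptions: keywords are lowercase runs of word characters (real engines use configurable analyzers), and the paper and section names are made up. After the one-time build, finding every section that mentions a keyword is a single dictionary lookup instead of a full scan.

```python
import re
from collections import defaultdict


def build_index(papers: dict[str, dict[str, str]]) -> dict[str, set[tuple[str, str]]]:
    """Map every keyword to the set of (newspaper, section) pairs it appears in."""
    index: dict[str, set[tuple[str, str]]] = defaultdict(set)
    for paper, sections in papers.items():
        for section, text in sections.items():
            # Naive analyzer (assumption): lowercase runs of word characters.
            for word in re.findall(r"\w+", text.lower()):
                index[word].add((paper, section))
    return index


papers = {
    "Daily News": {
        "Sports": "James and the Lakers beat Harden.",
        "Local": "Road works downtown.",
    },
    "Evening Post": {"Sports": "NBA Finals preview: Lakers favored."},
}

index = build_index(papers)  # built once, up front
print(sorted(index["lakers"]))  # [('Daily News', 'Sports'), ('Evening Post', 'Sports')]
```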

Why use a full-text search engine?

A colleague once asked me: why use a search engine at all? All of our data is in the database, and Oracle, SQL Server, and other databases also provide query, retrieval, and even cluster analysis functions. Isn't querying the database directly enough?

Indeed, most query needs can be met with database queries. If queries are slow, you can build database indexes, optimize the SQL, or even introduce a cache to return data faster.

If the data volume grows further, you can shard the database and its tables to spread the query load. So why bother with a full-text search engine? The main reasons are the following:

Type Of Data

Full-text index search handles unstructured data: it can efficiently find any word or phrase that occurs anywhere in large volumes of unstructured text.

For example, web search engines such as Google and Baidu build indexes from the keywords in web pages; when we search for keywords, they return all the pages whose index entries match. Searching application logs is another common case.

Relational databases do not support searching this kind of unstructured text well.

Index Maintenance

In general, full-text search in traditional databases is a half-hearted feature, because few people store large text fields in a database in the first place. A full-text query has to scan the entire table, and when the data volume is large, even optimizing the SQL has little effect.

Even if an index is built, it is troublesome to maintain, because inserts and updates cause the index to be rebuilt.
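To see the table-scan problem concretely, here is a small sketch using Python's built-in `sqlite3` module, with SQLite standing in for a generic relational database (the `docs` table and `idx_docs_body` index are hypothetical). An ordinary B-tree index on `body` lets an exact-match query seek directly (`SEARCH` in the query plan), but a "contains" query with a leading `%` wildcard cannot use it that way, so SQLite falls back to a `SCAN`. The exact plan wording varies between SQLite versions, so only the leading keyword matters here.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE docs (id INTEGER PRIMARY KEY, body TEXT)")
conn.execute("CREATE INDEX idx_docs_body ON docs (body)")  # ordinary B-tree index
conn.executemany(
    "INSERT INTO docs (body) VALUES (?)",
    [("James scores 40",), ("Harden traded",), ("Lakers win",)],
)


def plan(sql: str, params: tuple = ()) -> list[str]:
    """Return the 'detail' column of each EXPLAIN QUERY PLAN row."""
    return [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql, params)]


# Exact match: the index can seek straight to the row (plan starts with SEARCH).
exact_plan = plan("SELECT id FROM docs WHERE body = ?", ("Lakers win",))
# "Contains" match with a leading wildcard: no seek possible (plan starts with SCAN).
contains_plan = plan("SELECT id FROM docs WHERE body LIKE ?", ("%Lakers%",))

print(exact_plan)
print(contains_plan)
print(conn.execute("SELECT id FROM docs WHERE body LIKE ?", ("%Lakers%",)).fetchall())  # [(3,)]
```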

When to use a full-text search engine:

- The data being searched is a large amount of unstructured text.

- The number of records reaches hundreds of thousands, millions, or more.

- A large number of interactive, text-based queries must be supported.

- You need very flexible full-text search queries.

- There is a special need for highly relevant search results that no available relational database can meet.

- There are relatively few requirements for different record types, non-text data manipulation, or secure transaction processing.

Lucene, Solr, Elasticsearch: which is better?

The current mainstream search engines are Lucene, Solr, and Elasticsearch.

All of them build their indexes as inverted indexes. So what is an inverted index?

Wikipedia: An inverted index (also called a reverse index, postings file, or inverted file) is an indexing method that stores, for full-text search, a mapping from each word to its locations in a document or a set of documents. It is the most commonly used data structure in document retrieval systems.
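Here is a minimal sketch of an inverted index, assuming naive lowercase tokenization and integer document ids. Each term maps to a sorted postings list of the documents that contain it, and a multi-term AND query intersects those lists with a linear merge, starting from the shortest list. The function names are hypothetical; Lucene's real postings also store term frequencies, positions, and compressed encodings.

```python
import re


def tokenize(text: str) -> list[str]:
    return re.findall(r"\w+", text.lower())


def build_inverted_index(docs: list[str]) -> dict[str, list[int]]:
    """Map each term to the sorted list of doc ids (positions in `docs`) containing it."""
    index: dict[str, list[int]] = {}
    for doc_id, text in enumerate(docs):
        for term in set(tokenize(text)):
            index.setdefault(term, []).append(doc_id)  # ids arrive in ascending order
    return index


def intersect(a: list[int], b: list[int]) -> list[int]:
    """Merge-style intersection of two sorted postings lists."""
    i = j = 0
    out = []
    while i < len(a) and j < len(b):
        if a[i] == b[j]:
            out.append(a[i])
            i += 1
            j += 1
        elif a[i] < b[j]:
            i += 1
        else:
            j += 1
    return out


def search_all(index: dict[str, list[int]], query: str) -> list[int]:
    """Return ids of docs containing every query term (boolean AND)."""
    terms = tokenize(query)
    if not terms:
        return []
    postings = sorted((index.get(t, []) for t in terms), key=len)  # shortest first
    result = postings[0]
    for p in postings[1:]:
        result = intersect(result, p)
    return result


docs = [
    "James leads the Lakers",
    "Harden and James in the NBA Finals",
    "NBA Finals schedule announced",
]
idx = build_inverted_index(docs)
print(idx["james"])                         # [0, 1]
print(search_all(idx, "NBA finals James"))  # [1]
```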

Lucene

Lucene is a full-text search engine library written entirely in Java. It is not a complete application but a code library and API that can easily be used to add search functionality to your application. Lucene provides powerful features through a simple API.

Scalable, high-performance indexing:

- Over 150GB/hour on modern hardware.

- Small RAM requirements: only 1MB of heap.

- Incremental indexing is as fast as batch indexing.

- Index size is roughly 20-30% of the size of the indexed text.

Powerful, accurate, and efficient search algorithms:

- Ranked searching: the best results are returned first.

- Many powerful query types: phrase queries, wildcard queries, proximity queries, range queries, and more.

- Fielded searching (e.g. title, author, content).

- Sorting by any field.

- Multiple-index searching with merged results.

- Allows simultaneous updates and searches.

- Flexible faceting, highlighting, joins, and result grouping.

- Fast, memory-efficient, and typo-tolerant suggesters.

- Pluggable ranking models, including the Vector Space Model and Okapi BM25.

- Configurable storage engine (codec).
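Ranked searching with Okapi BM25, one of the ranking models listed above, can be sketched in a few lines. This is the textbook form of the formula, using the IDF variant log(1 + (N - n + 0.5) / (n + 0.5)) and the commonly used defaults k1 = 1.2 and b = 0.75; it is an illustration, not Lucene's exact `BM25Similarity` code, and the tokenization and sample documents are assumptions.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"\w+", text.lower())


def bm25_scores(docs: list[str], query: str, k1: float = 1.2, b: float = 0.75) -> list[float]:
    """Score every document against the query with textbook Okapi BM25."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avgdl = sum(len(toks) for toks in tokenized) / n_docs  # average document length
    df = Counter(term for toks in tokenized for term in set(toks))  # document frequency
    query_terms = tokenize(query)

    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in query_terms:
            f = tf[term]
            if f == 0:
                continue
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            # Term-frequency saturation (k1) and document-length normalization (b).
            score += idf * f * (k1 + 1) / (f + k1 * (1 - b + b * len(toks) / avgdl))
        scores.append(score)
    return scores


docs = [
    "Lakers win the NBA Finals",
    "Lakers trade rumors",
    "Weather report for the weekend",
]
scores = bm25_scores(docs, "lakers finals")
ranking = sorted(range(len(docs)), key=lambda i: scores[i], reverse=True)
print(ranking)  # [0, 1, 2]
```

The document matching both query terms ranks first, and the rarer term ("finals") contributes more than the common one ("lakers") because of its higher IDF.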

Cross-platform solution:

- Available as open source software under the Apache License, which lets you use Lucene in both commercial and open source programs.

- 100%-pure Java.

- Index-compatible implementations are available in other programming languages.

Apache Software Foundation:

- Backed by the Apache community, which supports the open source projects of the Apache Software Foundation.

However, Lucene is just a library. To take full advantage of its features, you need to use Java and integrate Lucene into your program, and it takes a lot of learning to understand how it works. Mastering Lucene is genuinely complicated.

Solr

Apache Solr is an open source search platform built on the Java library Lucene. It exposes Apache Lucene's search functionality in a user-friendly way.

Having been in the industry for almost a decade, it is a mature product with a strong and extensive user community.

It provides distributed indexing, replication, load-balanced querying, and automatic failover and recovery. Properly deployed and well managed, it can become a highly reliable, scalable, and fault-tolerant search engine.

Many internet giants, such as Netflix, eBay, Instagram, and Amazon (CloudSearch), use Solr because it can index and search multiple sites.

The main feature list includes:

- Full-text search

- Highlighting

- Faceted search

- Real-time indexing

- Dynamic clustering

- Database integration

- NoSQL features and rich document processing (such as Word and PDF files)
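Solr is queried over HTTP with request parameters. As a hedged sketch, the helper below only builds (it does not send) a `/select` URL that combines a query with faceting (`facet`, `facet.field`) and highlighting (`hl`, `hl.fl`). The host, the `news` collection, and the `section`/`body` field names are hypothetical; check the parameters against the Solr Reference Guide for your version.

```python
from urllib.parse import urlencode


def solr_select_url(base: str, collection: str, query: str,
                    facet_field: str, highlight_field: str) -> str:
    """Build a Solr /select URL with faceting and highlighting turned on."""
    params = {
        "q": query,                  # e.g. "body:Lakers"
        "facet": "true",             # faceted search
        "facet.field": facet_field,  # count hits per value of this field
        "hl": "true",                # highlighting
        "hl.fl": highlight_field,    # field(s) to highlight
        "wt": "json",                # response format
    }
    return f"{base}/solr/{collection}/select?{urlencode(params)}"


url = solr_select_url("http://localhost:8983", "news", "body:Lakers", "section", "body")
print(url)
```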

ElasticSearch

Elasticsearch is an open source (Apache 2 license), RESTful search engine built on the Apache Lucene library.

Elasticsearch was launched a few years after Solr. It provides a distributed, multi-tenant-capable full-text search engine with an HTTP web interface (REST) and schema-free JSON documents.

Elasticsearch's official client libraries cover Java, Groovy, PHP, Ruby, Perl, Python, .NET, and JavaScript.
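Because the interface is plain HTTP plus JSON, any language can talk to Elasticsearch even without an official client. The sketch below uses only Python's standard library to build a `_search` request with a `match` query; the host, the `news` index, and the `body` field are hypothetical, and the request is only constructed here, not sent.

```python
import json
import urllib.request


def build_search_request(host: str, index: str, field: str, text: str) -> urllib.request.Request:
    """Build (but do not send) an Elasticsearch _search request with a match query."""
    body = {"query": {"match": {field: text}}}
    return urllib.request.Request(
        url=f"{host}/{index}/_search",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_search_request("http://localhost:9200", "news", "body", "NBA Finals")
print(req.get_method(), req.full_url)  # POST http://localhost:9200/news/_search
print(req.data.decode("utf-8"))        # {"query": {"match": {"body": "NBA Finals"}}}
```

Passing `req` to `urllib.request.urlopen` would send it to a running cluster.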

As a distributed search engine, Elasticsearch divides an index into shards, and each shard can have multiple replicas.

Each Elasticsearch node can hold one or more shards, and a node can also act as a coordinator, delegating operations to the correct shards.
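Routing a document to a shard is conceptually a hash of its routing value (the document id by default) modulo the number of primary shards. The toy coordinator below illustrates that idea plus scatter-gather search. It is a simplified sketch, not Elasticsearch's implementation: `zlib.crc32` stands in for the murmur3 hash Elasticsearch uses, newer versions refine the formula with a routing-shards factor, and the three-shard setting and class names are hypothetical.

```python
import zlib
from typing import Optional

NUM_PRIMARY_SHARDS = 3  # hypothetical index setting


def shard_for(routing: str, num_shards: int = NUM_PRIMARY_SHARDS) -> int:
    """Pick a shard as hash(routing) % number_of_primary_shards.

    zlib.crc32 is a stand-in for the murmur3 hash Elasticsearch actually uses.
    """
    return zlib.crc32(routing.encode("utf-8")) % num_shards


class ToyCoordinator:
    """Toy coordinating node: routes each write to one shard, fans searches out to all."""

    def __init__(self, num_shards: int = NUM_PRIMARY_SHARDS) -> None:
        self.shards: list[dict[str, str]] = [{} for _ in range(num_shards)]

    def index(self, doc_id: str, text: str) -> None:
        self.shards[shard_for(doc_id, len(self.shards))][doc_id] = text

    def get(self, doc_id: str) -> Optional[str]:
        # A lookup by id only has to touch the single shard the id routes to.
        return self.shards[shard_for(doc_id, len(self.shards))].get(doc_id)

    def search(self, word: str) -> list[str]:
        hits: list[str] = []
        for shard in self.shards:  # scatter the query to every shard...
            hits.extend(d for d, text in shard.items() if word in text.lower().split())
        return sorted(hits)  # ...then gather and merge the per-shard results


coord = ToyCoordinator()
coord.index("1", "James scores 40")
coord.index("2", "Lakers win")
coord.index("3", "James joins the Lakers")
print(coord.search("james"))  # ['1', '3']
print(coord.get("2"))         # Lakers win
```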

Elasticsearch provides scalable, near real-time search. One of its main features is multi-tenancy. Its main features include:

- Distributed search

- Multi-tenant

- Analytical search

- Grouping and aggregation
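Grouping and aggregation are expressed in the same JSON query language. The sketch below shows a request body that combines a `match` query with a `terms` aggregation (with `size: 0` so that only aggregation results come back), plus a rough local equivalent of what that aggregation computes: a document count per distinct value, most frequent first. The `section` and `body` fields are hypothetical, and `section` is assumed to be mapped as a `keyword` field, which is what terms aggregations normally expect.

```python
import json
from collections import Counter

# Request body for POST /<index>/_search: match query + terms aggregation.
agg_request = {
    "size": 0,  # return only aggregation results, no individual hits
    "query": {"match": {"body": "Lakers"}},
    "aggs": {"by_section": {"terms": {"field": "section"}}},
}


def local_terms_agg(docs: list[dict], field: str, size: int = 10) -> list[dict]:
    """Rough local equivalent of a terms aggregation over already-matched docs."""
    counts = Counter(doc[field] for doc in docs if field in doc)
    return [{"key": key, "doc_count": n} for key, n in counts.most_common(size)]


matching_docs = [
    {"section": "Sports", "body": "Lakers win"},
    {"section": "Sports", "body": "Lakers trade rumors"},
    {"section": "Business", "body": "Lakers sponsorship deal"},
]
print(json.dumps(agg_request))
print(local_terms_agg(matching_docs, "section"))
# [{'key': 'Sports', 'doc_count': 2}, {'key': 'Business', 'doc_count': 1}]
```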

Elasticsearch or Solr: which is better?

Some companies need to develop their own search framework with Lucene at the bottom layer. So here we focus on these questions: which one is better, what is the difference between them, and which one should you use?

Historical comparison

Apache Solr is a mature project with a large and active development and user community, backed by the Apache brand. First released as open source in 2006, Solr long occupied the search engine space and was the engine of choice for anyone who needed search capabilities.

Its maturity translates into rich functionality beyond simple text indexing and searching, such as faceting, grouping, powerful filtering, pluggable document processing, pluggable search chain components, language detection, and more.

Solr dominated the search field for many years. Then, around 2010, Elasticsearch appeared on the market as another option. At the time it was far less stable than Solr, lacked the depth of Solr's features, and had none of its mindshare, brand, and so on.

Although Elasticsearch was young, it had advantages of its own. It is built on more modern principles, aimed at more modern use cases, and designed to make large indexes and high query rates easier to handle. Also, because it was too young to have a community to answer to, it was free to move forward without the consensus or cooperation of other people (users or developers), without concern for backward compatibility, and without the other obligations that more mature software usually has to deal with. As a result, it exposed some very popular features before Solr did (for example, Near Real-Time (NRT) search).

Technically speaking, the NRT search capability comes from Lucene, the underlying search library used by both Solr and Elasticsearch. Ironically, because Elasticsearch exposed NRT search first, people associate NRT search with Elasticsearch.

Given that Solr and Lucene are part of the same Apache project, one would have expected Solr to offer such a sought-after feature first.
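The essence of near real-time search can be sketched as a toy model: newly indexed documents sit in an in-memory buffer and become visible to searches only when a refresh turns that buffer into a new searchable segment, without waiting for a full durable commit. In Elasticsearch this refresh happens periodically (every second by default, controlled by `index.refresh_interval`). The `NrtIndex` class is a hypothetical illustration, not Lucene's API.

```python
class NrtIndex:
    """Toy model of near real-time search (hypothetical, not Lucene's API).

    Added documents go to an in-memory buffer; refresh() turns the buffer into a
    new immutable segment, and searches only ever look at segments.
    """

    def __init__(self) -> None:
        self.segments: list[tuple[str, ...]] = []  # searchable
        self.buffer: list[str] = []                # indexed but not yet visible

    def add(self, doc: str) -> None:
        self.buffer.append(doc)

    def refresh(self) -> None:
        if self.buffer:
            self.segments.append(tuple(self.buffer))
            self.buffer = []

    def search(self, word: str) -> list[str]:
        return [doc for seg in self.segments for doc in seg if word in doc.lower().split()]


nrt = NrtIndex()
nrt.add("Lakers win the NBA Finals")
print(nrt.search("lakers"))  # [] (not visible until the next refresh)
nrt.refresh()                # Elasticsearch does this periodically
print(nrt.search("lakers"))  # ['Lakers win the NBA Finals']
```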

Comparison of feature differences

Both are popular, advanced open source search engines. Both are built around the same core search library, Lucene, but they are different.

As with everything, each has its advantages and disadvantages, and either may be better or worse depending on your needs and expectations.

Comprehensive comparison

We compare them in the following aspects:

① Popularity trends in recent years

Let's look at the Google search trends for both products. Google Trends shows that Elasticsearch attracts much more interest than Solr, but this does not mean Apache Solr is dead. Whatever some may think, Solr is still one of the most popular search engines, with strong community and open source support.

② Installation and configuration

Compared with Solr, Elasticsearch is easy to install and very lightweight: you can install and run it in minutes.

However, this ease of deployment and use can become a problem if Elasticsearch is not properly managed.

The JSON-based configuration is simple, but it is not for you if you want to attach a comment to each setting in the file, since JSON has no comment syntax.

Overall, if your application already uses JSON, Elasticsearch is the better choice.

Otherwise, use Solr because its schema.xml and solrconfig.xml are well documented.

③ Community

Solr has a larger, more mature community of users, developers, and contributors. ES, by contrast, has a smaller but active user community and a growing community of contributors.

Solr is a true representative of the open source community: anyone can contribute to Solr, and new Solr developers (called committers) are chosen based on merit.

Elasticsearch is technically open source, but less so in spirit. Anyone can see the source, change it, and contribute, but only Elasticsearch employees can actually commit changes to Elasticsearch.

Solr contributors and committers come from many different organizations, while Elasticsearch committers come from a single company.

④ Maturity

Solr is more mature, but ES is growing rapidly, and I consider it stable.

⑤ Documentation

Solr scores high here. It is a very well documented product, with clear examples and API use cases.

Elasticsearch's documentation is well organized, but it lacks good examples and clear configuration instructions.

Sum Up

So, Solr or Elasticsearch? Sometimes there is no clear answer. Whichever you choose, you first need to understand your use cases and future requirements, and then weigh each engine's attributes against them.

Keep these points in mind:

- Because of its ease of use, Elasticsearch is more popular among new developers. However, if you are already used to working with Solr, keep using it, as there is no particular advantage in migrating to Elasticsearch.

- If you need to handle analytical queries in addition to text search, Elasticsearch is the better choice.

- If you need a distributed index, choose Elasticsearch. For cloud and distributed environments that require good scalability and performance, Elasticsearch is the better choice.

- Both have good business support (consulting, production support, integration, etc.).

- Both have great operational tools, although Elasticsearch has attracted the DevOps crowd more because of its easy-to-use API, which has fostered a livelier tool ecosystem around it.

- Elasticsearch dominates open source log management; many organizations index their logs in Elasticsearch to make them searchable. Solr can now be used for this too, but it missed the boat.

- Solr is still more text-oriented. Elasticsearch, on the other hand, is often used for filtering, grouping, and analytical query workloads, not necessarily text search. Elasticsearch developers have invested a lot of effort at both the Lucene and Elasticsearch levels to make such queries more efficient (reducing memory footprint and CPU usage). Therefore, Elasticsearch is the better choice for applications that need not only text search but also complex search-time aggregations.

- Elasticsearch is easier to get started with: one download and one command start everything. Solr has traditionally required more work and knowledge, but it has recently made great strides in closing this gap; now it mainly needs to change its reputation.

- In terms of performance, they are roughly equal. I say "roughly" because no one has done a comprehensive, unbiased benchmark. For 95% of use cases, either option will perform well; for the remaining 5%, you will need to test both with your specific data and specific access patterns.

- Operationally, Elasticsearch is relatively simple: it runs as a single process. Solr's fully distributed deployment model, SolrCloud, which is comparable to Elasticsearch, relies on Apache ZooKeeper. ZooKeeper is very mature and very widely used, but it is still one more moving part. That said, if you use Hadoop, HBase, Spark, Kafka, or other newer distributed software, you may already be running ZooKeeper somewhere in your organization.

- Although Elasticsearch has a built-in ZooKeeper-like component, Zen, ZooKeeper is better at preventing the dreaded split-brain problem that sometimes occurs in Elasticsearch clusters. To be fair, Elasticsearch developers are aware of this problem and are working to improve this aspect of Elasticsearch.

- If you like monitoring and metrics, Elasticsearch is paradise: it exposes more metrics than there are people squeezed into Times Square on New Year's Eve! Solr exposes the key metrics, but far fewer than Elasticsearch.
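The split-brain problem mentioned above arises when a network partition leaves two halves of a cluster that each elect their own master. The usual defense, used by ZooKeeper and by the `discovery.zen.minimum_master_nodes` setting of pre-7.0 Elasticsearch (recommended to be master-eligible nodes / 2 + 1), is to require a strict majority before electing. This minimal sketch, with hypothetical function names, shows why a majority rule means at most one side of a partition can ever elect a master.

```python
def quorum(master_eligible: int) -> int:
    """Smallest strict majority of the master-eligible nodes: n // 2 + 1."""
    return master_eligible // 2 + 1


def can_elect_master(partition_size: int, master_eligible: int) -> bool:
    """A partition may elect a master only if it holds a quorum of master-eligible nodes."""
    return partition_size >= quorum(master_eligible)


# 3 master-eligible nodes split 2 | 1: only the larger side can elect a master.
print(quorum(3), can_elect_master(2, 3), can_elect_master(1, 3))  # 2 True False
# 4 nodes split 2 | 2: neither side reaches quorum (3), so no master is elected
# rather than two.
print(can_elect_master(2, 4))  # False
```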